Biography

Benjin Zhu is a Research Scientist at Li Auto and a postdoctoral researcher at Tsinghua University with Prof. Jifeng DAI. He received his Ph.D. from the Department of Electronic Engineering, CUHK in 2025, advised by Prof. Hongsheng LI and Prof. Xiaogang WANG in the MMLab, and his B.Eng. from SCUT in 2018. Before his Ph.D., he was at MEGVII Research with Dr. Gang Yu, Dr. Xiangyu Zhang, and Dr. Jian Sun.

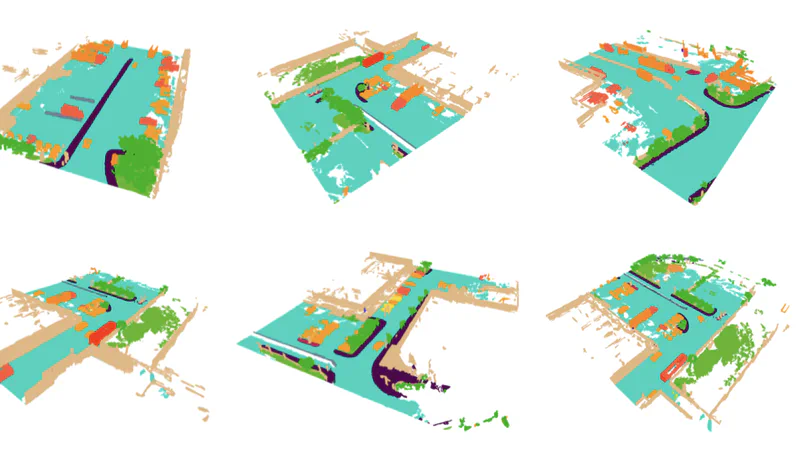

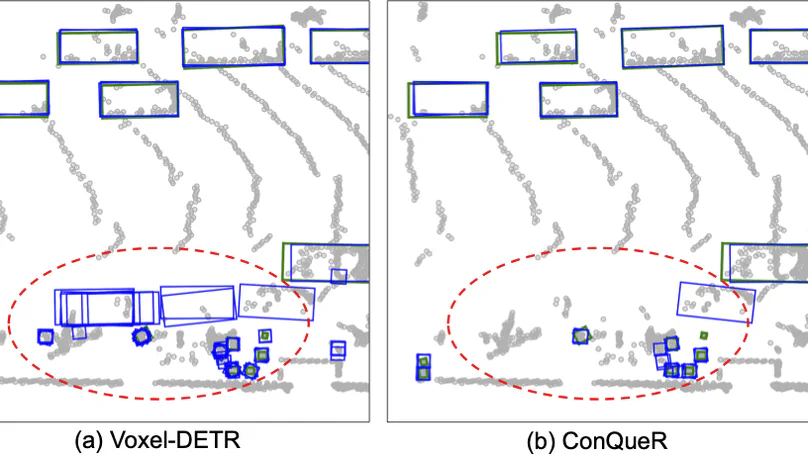

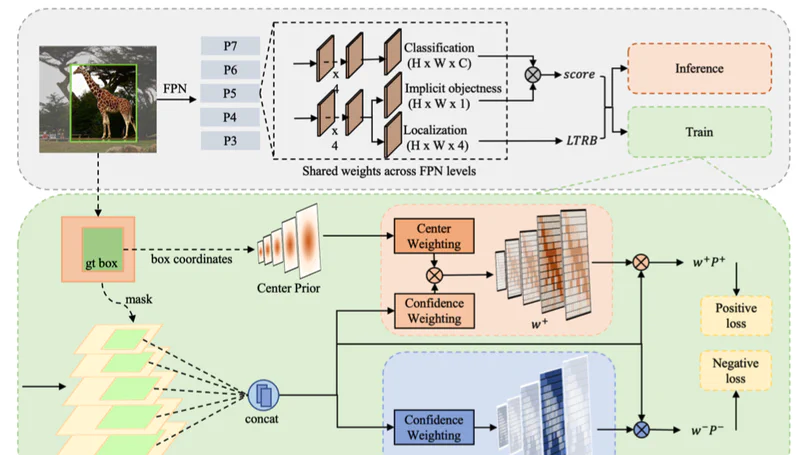

His current research focuses on cross-embodiment vision-language-action (VLA) models and world models with reinforcement learning (RL). He led the team that won the inaugural nuScenes 3D Object Detection Challenge at the Workshop on Autonomous Driving (WAD), CVPR 2019, and authored the widely used open-source frameworks Det3D, CVPods, and EFG.

Interests

- Embodied AI

- Physical Intelligence

Education

Ph.D. in Electronic Engineering, 2021–2025

The Chinese University of Hong Kong (CUHK)

B.Eng. in Software Engineering, 2014–2018

South China University of Technology (SCUT)

News

- 2026-05 Released the Mind-Omni series, our L4 autonomous driving stack covering VLA, World Models, and RL. ✨

- 2025-06 Graduated from CUHK MMLab and joined Li Auto as a Research Scientist working on L4 autonomous driving.